If I can paraphrase a cliché:

bad things happen to good programs

Things go wrong, even in the most carefully coded and thoroughly tested applications. In my opinion, a sign of a good app is if it gives meaningful and useful error messages when things go wrong.

At Cypress we spend a lot of time making every type of error we display to the user as useful as possible. These errors include failed assertions during tests (after all, Cypress is an end-to-end test runner) and crashes inside our application.

Useful unit test assertion failures

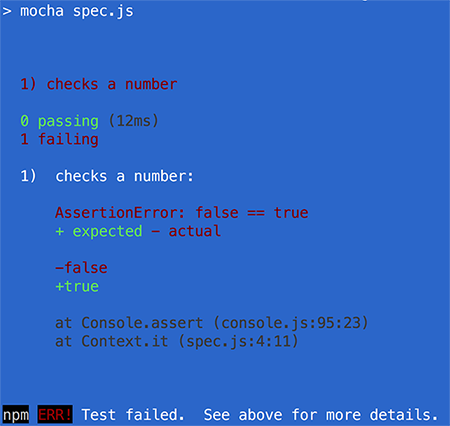

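Let me start by showing an example of a typical failed test message that is NOT user-friendly. Let’s use the Mocha test runner and plain console.assert to verify that a result is equal to an expected value. (This relies on older Node.js versions, where console.assert throws on failure; since Node.js 10 it only prints a message and the test would not fail.)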

it('checks a number', () => {

const value = 3

const expected = 3

console.assert(value === expected)

})

The test passes; no big surprise there. Let us change the value to be “wrong” on purpose to see how the test reports its failure.

it('checks a number', () => {

const value = 10

const expected = 3

console.assert(value === expected)

})

The npm test command fails and suggests that we look at the text above for details. Yet, aside from the stack frame where the assertion failed, Context.it (spec.js:4:11), there is almost no useful information! We have to open the file spec.js at line 4 ourselves, trace the values used in the console.assert expression, and try to guess what went wrong in the test.

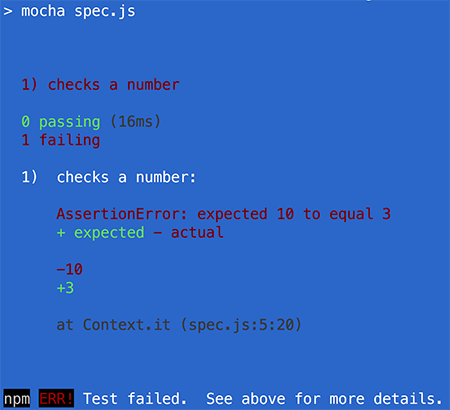

We can provide more information ourselves by using a library of matchers - small functions that compare values and throw detailed error messages. A popular library like chai can make our test code a lot more readable, and our test failure a lot more useful.

const expect = require('chai').expect

it('checks a number', () => {

const value = 10

const expected = 3

expect(value).to.equal(expected)

})

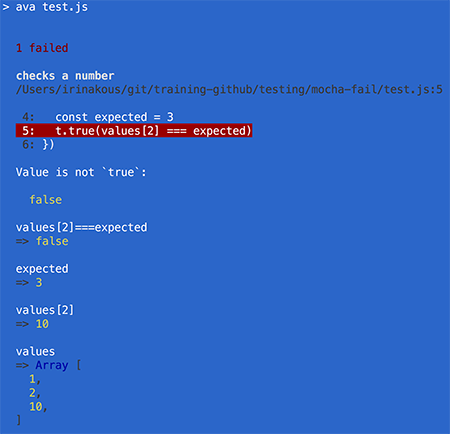
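
To make the idea of a matcher concrete, here is a minimal sketch of one written by hand. The names `expectEqual` and `messageFor` are invented for illustration, and this is not how chai is implemented. It only shows the general shape: compare two values and, on mismatch, throw an error that names both of them.

```javascript
// Hypothetical hand-rolled matcher (not chai's implementation):
// compares two values with === and throws an error that
// describes both of them when they differ.
function expectEqual(actual, expected) {
  if (actual !== expected) {
    throw new Error(
      `expected ${JSON.stringify(actual)} to equal ${JSON.stringify(expected)}`
    )
  }
}

// Demo helper: returns the failure message instead of crashing,
// or null if the callback did not throw.
function messageFor(fn) {
  try {
    fn()
    return null
  } catch (err) {
    return err.message
  }
}

console.log(messageFor(() => expectEqual(10, 3)))
// prints: expected 10 to equal 3
```

The point is that the error message is built at the moment of comparison, while both values are still at hand, so the reader of the test report does not have to reconstruct them from the source.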

Yet we could take another step toward clearer test output. Imagine we are comparing an item from an array with an expected value. Wouldn’t it be super useful to see the entire array automatically if the test fails? We could manually specify all the things to print in case of failure, but that is a long list! Or we could automatically transform the test code to instrument it. For example, the test runner Ava makes its assertions more powerful via the power-assert module.

import test from 'ava'

test('checks a number', t => {

const values = [1, 2, 10]

const expected = 3

t.true(values[2] === expected)

})

Note the wealth of useful information shown automatically. It’s almost everything a developer might need to debug the failure:

- Failed test source file and line number

- The test source line itself

- The expression that failed the t.true predicate

- The left and right values in the strict equality comparison values[2] === expected

- The “outer” values of all variables participating in the expression that failed. In our case the entire values array is printed

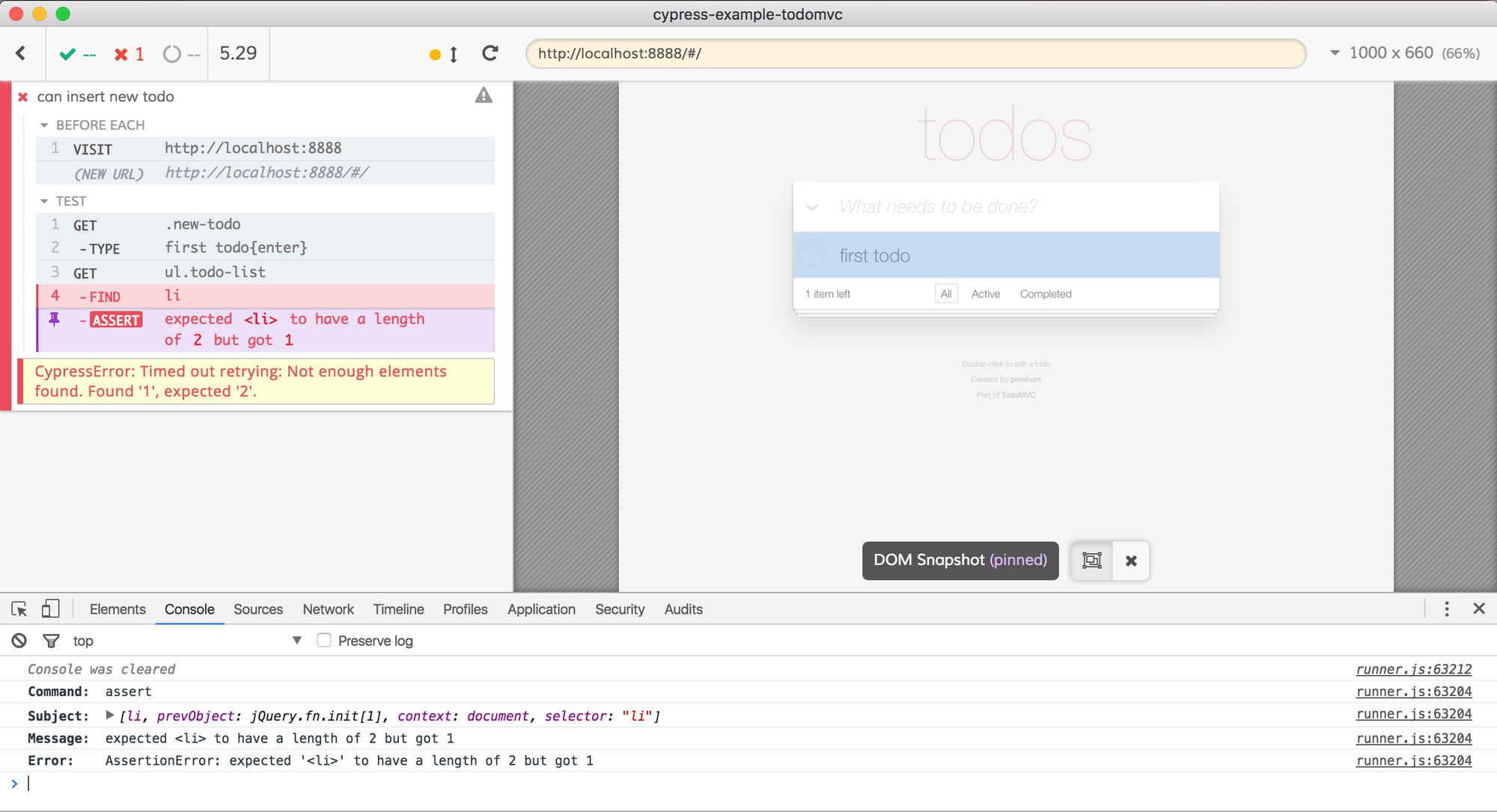
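
For comparison, the manual alternative mentioned earlier, listing by hand everything to print on failure, might look like the sketch below. The helper `assertWithContext` is hypothetical and is not part of Ava or power-assert; it only illustrates why doing this for every assertion quickly becomes tedious.

```javascript
// Hypothetical helper (not part of Ava or power-assert): throws an
// error that includes every value the caller passed as context.
function assertWithContext(condition, description, context) {
  if (condition) return
  const details = Object.keys(context)
    .map((name) => `  ${name}: ${JSON.stringify(context[name])}`)
    .join('\n')
  throw new Error(`${description}\n${details}`)
}

const values = [1, 2, 10]
const expected = 3

let failure = null
try {
  // every interesting value has to be listed by hand
  assertWithContext(values[2] === expected, 'values[2] === expected', {
    'values[2]': values[2],
    expected,
    values,
  })
} catch (err) {
  failure = err.message
}

console.log(failure)
```

Automatic instrumentation gathers the same kind of context from the expression itself, so the test author only writes the comparison.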

Useful E2E assertion failures

Cypress.io is an end-to-end test runner and operates at the web application’s user interface level. So when a test fails there are plenty of things to record and display. A screenshot is taken automatically on failure, but we also pay close attention to the information coming from the matcher function. Our goal is to describe the expected outcome of the step that failed and to output all relevant UI information at the moment of failure.

Imagine testing the TodoMVC application and checking if the ToDo list has 1 element. The end-to-end test looks something like this:

beforeEach(() => {

cy.visit('http://localhost:8888')

})

it('can insert new todo', () => {

cy.get('.new-todo').type('first todo{enter}')

cy.get('ul.todo-list').find('li').should('have.length', 1)

})

Let us change the test to expect 2 elements (but we still insert only 1).

it('can insert new todo', () => {

cy.get('.new-todo').type('first todo{enter}')

cy.get('ul.todo-list').find('li').should('have.length', 2)

})

The test fails, and the application shows the following:

You can find the full set of Cypress TodoMVC tests in the cypress-example-todomvc repository.

Right away, the developer sees all the steps in the test in the Command Log on the left. Instead of the last stack frame, we see that the GET ul.todo-list step was successful. Then, after the FIND li step, it expected to find 2 elements in the list, but found just one.

We work hard to make sure that if any of the many Cypress API commands fails during a test, it generates a similarly helpful message. Our goal is to give the developer enough information to diagnose the problem immediately by looking at the Command Log’s output, maybe even without rerunning the tests.

Good error messages and video recordings of the browser are especially important when the tests execute on CI. You can store the videos as artifacts, the way CircleCI is configured in the cypress-example-todomvc repo.

Alternatively, you can send the screenshots and videos to Cypress and inspect the entire test run history in your Dashboard. Just pass the --record <key> option when running on CI.

All the useful information for successful and failed tests in a single place.

Useful CLI errors

Now let’s look at some typical server-side / CLI crashes. Imagine you run a program that tries to read a file.

const fs = require('fs')

function foo() {

fs.readFileSync('./important.json', 'utf8')

}

foo()

If the file does not exist, the CLI application crashes with a stack trace:

$ node index.js

fs.js:640

return binding.open(pathModule._makeLong(path), stringToFlags(flags), mode);

^

Error: ENOENT: no such file or directory, open './important.json'

at Error (native)

at Object.fs.openSync (fs.js:640:18)

at Object.fs.readFileSync (fs.js:508:33)

at foo (/examples/simple/index.js:3:6)

at Object.<anonymous> (/examples/simple/index.js:5:1)

at Module._compile (module.js:570:32)

at Object.Module._extensions..js (module.js:579:10)

at Module.load (module.js:487:32)

at tryModuleLoad (module.js:446:12)

at Function.Module._load (module.js:438:3)

Note that a casual user DOES NOT CARE ABOUT THE STACK TRACE. Not a single bit. The user only cares about one thing:

How do I make this work?

We, developers, might want a stack trace in the error report submitted by a user, but the user only needs to know what to do in case this error happens.

Some users might read the error message and spot its meaningful part, no such file or directory, open './important.json', but that is rare. Even in this case, it is unclear what the user should do: is the file expected to be there by default? Or is the user supposed to create the file first?

There is a mismatch between what the author of the software wants (a stack trace and verbose error information) and what the user wants (a short, actionable error message). This is why we have written @cypress/error-message, a way to handle expected crashes and display a user-friendly message with plenty of context. In the same situation as above, we anticipate that the file might not exist and tell the user what to do, including where to read more online.

const {formErrorText} = require('@cypress/error-message')

// we expect the following error

const fileReadError = {

description: 'Failed to read settings file',

solution: `

Please create file "important.json" with settings first,

then run the program again.

See more info at https://faq.company.com/setup

`

}

const fs = require('fs')

function foo() {

try {

fs.readFileSync('./important.json', 'utf8')

} catch (err) {

formErrorText(fileReadError)(err).then(console.error)

}

}

foo()

When run, this program shows a message that the user will find useful.

$ node after.js

----------

Failed to read settings file

Please create file "important.json" with settings first,

then run the program again.

See more info at https://faq.company.com/setup

----------

ENOENT: no such file or directory, open './important.json'

----------

Platform: darwin

Version: 15.6.0

There is a human-friendly error description and a proposed solution, not your typical Node.js mumbo-jumbo.
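
To show the general shape of such a message, here is a rough sketch of a formatter that produces similar output. It is not the implementation of @cypress/error-message: `formatUserError` is a made-up name, and printing `os.release()` as the version line is an assumption about what that line means.

```javascript
const os = require('os')

// Hypothetical formatter, NOT the @cypress/error-message implementation.
// Puts the actionable text first and the raw error details last.
function formatUserError(info, err) {
  const divider = '----------'
  return [
    divider,
    info.description,
    info.solution.trim(),
    divider,
    err.message,
    divider,
    `Platform: ${os.platform()}`,
    // assumption: the version line is the operating system release
    `Version: ${os.release()}`,
  ].join('\n')
}

const text = formatUserError(
  {
    description: 'Failed to read settings file',
    solution: 'Please create file "important.json" with settings first.',
  },
  new Error("ENOENT: no such file or directory, open './important.json'")
)

console.log(text)
```

The ordering is the design choice that matters: the description and the solution come before the technical details, so a user who stops reading after two lines still knows what to do.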

If the formErrorText call syntax looks weird, it is because we curry the function to separate its configuration from error handling. This makes it very easy to plug message formatting into promise chains.

const errorInformation = {

description: '...',

solution: '...'

}

fooAsync()

.catch(formErrorText(errorInformation))
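
The currying itself can be sketched in a few lines. The names `describeError` and `fooAsync` below are placeholders for illustration and the message format is invented. The first call fixes the configuration and returns a function that takes only the error, which is the shape `.catch` expects.

```javascript
// Hypothetical curried handler: configuration first, error second.
const describeError = (info) => (err) =>
  `${info.description}\n${info.solution}\n(${err.message})`

// Stand-in for any asynchronous operation that can fail.
const fooAsync = () => Promise.reject(new Error('disk is full'))

const errorInformation = {
  description: 'Failed to save report',
  solution: 'Free up some disk space and try again.',
}

const result = fooAsync()
  // the partially applied function is passed straight to .catch
  .catch(describeError(errorInformation))

// the promise now resolves with the formatted text
result.then(console.error)
```

Without currying, the handler would need a wrapper such as `.catch((err) => describeError(errorInformation, err))` at every call site.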

We open sourced this library on npm as @cypress/error-message and use it internally in all our CLI code. Stack traces without meaningful messages should only appear in truly unexpected crashes.